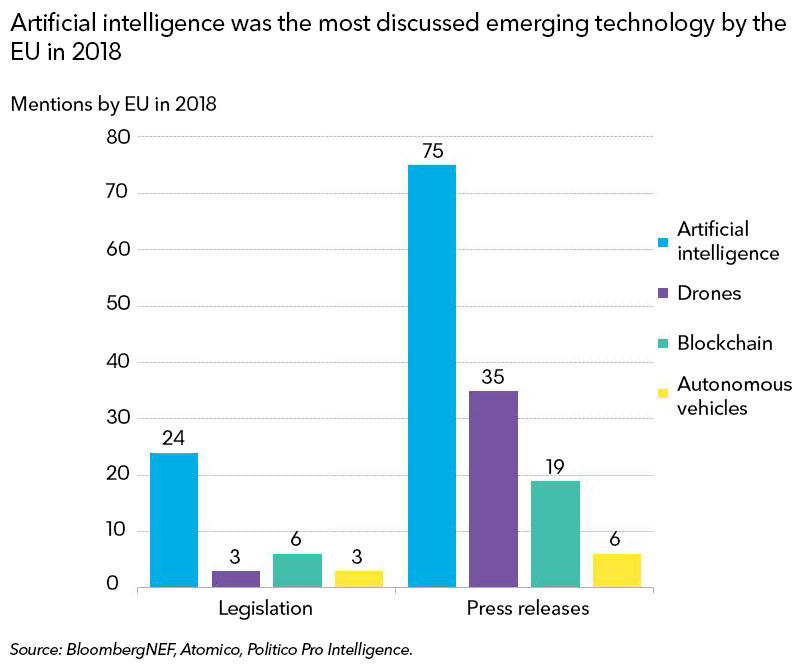

Artificial intelligence, and in particular machine learning, has been getting much more attention on two fronts. Companies are paying closer heed as AI becomes mainstream. European institutions, including the Parliament and the Commission, are preoccupied with privacy regulation and, most recently, with developing a unified EU-wide strategy for AI.

European legislators are paying close attention to the latest developments in AI, such as neural networks and so-called deep learning, which need vast and diverse datasets to function. Their response, however, is not limited to institutional hand-wringing. In December the EU released draft voluntary guidelines for AI developers and received more than 500 public comments. Final guidelines will be released later this month.

The EU’s new voluntary rules are intended to steer entrepreneurs toward developing AI that is ethical by design while promoting growth in the sector. They have two components. The first is respect for citizens’ right to privacy and the protection of their personal data, addressed largely in last year’s General Data Protection Regulation (GDPR). The second is technically robust AI that reduces bias and promotes equal access to data among similar parties.

Europe knows it is lagging behind the U.S. and China in AI development overall. AI has developed organically in the U.S., while China has vast datasets of its citizens’ personal information with which to train algorithms. The country has even published targets to nudge new companies into the sector.

One of the EU’s primary concerns with current AI is that it can produce flawed outcomes because of the data on which it was trained. Biased AI can be difficult to identify because of the lack of transparency in how it reaches its decisions.

Not all datasets are created equal. The GDPR, which took effect in the EU in May 2018, limited the unauthorized collection, storage and sale of personal data. China uses vast amounts of personal data, such as facial recognition images, to train algorithms. In the EU, by contrast, the GDPR has made it more difficult for entrepreneurs to access such personal datasets without specific consent or opt-in.

The second component of the new EU guidelines advocates reducing bias in AI. Because an algorithm’s output depends on the data used to train it, this goal may be harder to achieve without robust and complete datasets. That is particularly true if specific groups opt out of the collection of their personal data under the GDPR.

The EU is exploring data exchanges that remove personal identifiers from the data through so-called anonymization and aggregation techniques. The bloc has already agreed to data-export and exchange guidelines with Japan, which has similar personal data privacy regulations. Separately, blockchain technology has been proposed as the basis for a decentralized data marketplace.
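To make the idea of anonymization and aggregation more concrete, here is a minimal Python sketch built on stated assumptions. It is not the EU’s method or the format of any proposed data exchange. The sketch drops direct identifiers (the hypothetical `name` and `email` fields) and coarsens exact ages into ten-year bands. It then releases only counts per (age band, city) group and suppresses any group smaller than a threshold `k`, a simple k-anonymity-style rule. All records, field names and the threshold are illustrative.

```python
from collections import Counter

# Hypothetical example records; names, fields and values are illustrative only.
records = [
    {"name": "Anna", "email": "anna@example.com", "age": 34, "city": "Lyon"},
    {"name": "Ben", "email": "ben@example.com", "age": 37, "city": "Lyon"},
    {"name": "Carla", "email": "carla@example.com", "age": 52, "city": "Madrid"},
    {"name": "Dan", "email": "dan@example.com", "age": 31, "city": "Lyon"},
    {"name": "Eva", "email": "eva@example.com", "age": 55, "city": "Madrid"},
    {"name": "Finn", "email": "finn@example.com", "age": 44, "city": "Lyon"},
]

# Fields assumed (for this sketch) to identify a person directly.
DIRECT_IDENTIFIERS = {"name", "email"}


def strip_identifiers(record):
    """Return a copy of the record without direct identifiers."""
    return {key: value for key, value in record.items() if key not in DIRECT_IDENTIFIERS}


def age_band(age, width=10):
    """Generalize an exact age into a band such as '30-39'."""
    lower = (age // width) * width
    return f"{lower}-{lower + width - 1}"


def aggregate(rows, k=2):
    """Count people per (age band, city), dropping groups with fewer than k people."""
    counts = Counter(
        (age_band(row["age"]), row["city"]) for row in map(strip_identifiers, rows)
    )
    return {group: n for group, n in counts.items() if n >= k}


if __name__ == "__main__":
    # Finn's group ('40-49', 'Lyon') has only one member, so it is suppressed.
    print(aggregate(records))  # {('30-39', 'Lyon'): 3, ('50-59', 'Madrid'): 2}
```

Removing names and emails alone is often not enough, because a rare combination of the remaining attributes can still single someone out. That is why the sketch also generalizes ages and withholds small groups.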

The EU’s guidelines state that “ethical and trustworthy” AI is also necessary to prevent anxiety over the rise of artificial general intelligence. For example, the EU encourages building transparency into algorithms so that they can explain how an AI system reaches certain decisions. This should help build trust in the outcomes of tasks such as the automated control of critical systems.

While AI is still limited in scope and can solve only the problems it is trained for, the new guidelines aim to steer developers toward intentionally limiting the perceived risks of general AI.

Clients can access Tech Radar on the Terminal or on the web.